AI Proving Grounds

Test & Prove Your AI SOC in the AI Proving Grounds

Train and test humans and AI agents together in a realistic environment before they reach live SOC operations.

Enterprise-Scale Realism

Production-grade environments that mirror live SOC operations

Training Humans and AI Together

Refine AI models and agents alongside human operators with realistic cyber simulations

Live-Fire AI Agent Testing

Stress test performance under adversary pressure

Prove Human & AI Agent Readiness Before Production Deployment

AI agents rarely fail in clean lab environments. They fail in real security operations: amid noisy SOC workflows, under adversary pressure, and across complex escalation paths.

SimSpace provides the enterprise AI Proving Grounds, where organizations rigorously test AI agents alongside human operators under realistic operational conditions. Measure performance using meaningful AI agent evaluation metrics, identify failure modes, and validate AI agents before deployment.

Before your AI reaches production, trust that it will hold up under real-world pressure.

The AI Proving Grounds Center of Excellence

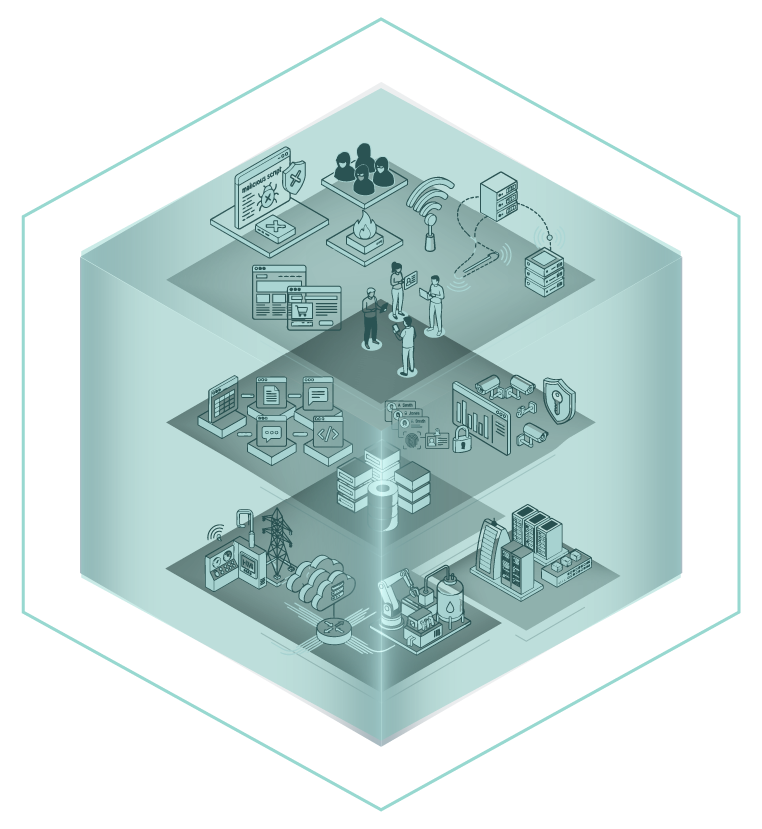

LAYER 3: Activity (Users / Traffic / App Content / Attacks)

LAYER 2: Apps & Tools (Network / Identity / Endpoints / Data)

LAYER 1: Infrastructure (IT / Cloud / OT / IoT / ICS)

EMULATE ANY ACTIVITY/ATTACK BEHAVIOR

CYBER INFRASTRUCTURE THAT GENERATES AI AGENT TRAINING DATA

DESIGNED TO OPTIMIZE JOINT AI + HUMAN PERFORMANCE

Generate synthetic, precision-labeled training data and retrain with customer data

By generating synthetic telemetry and attack data based on true enterprise conditions, teams can make their models and agents smarter and more predictive. Then, organizations can retrain models and agents with customer data, all without impacting production environments.

Test and validate agentic solutions

Test and validate autonomous AI agents operating within full workflows. Measure agent performance against realistic user emulation, attack emulation, and adversary behavior inside a replica of your environment.

Ensure agentic solutions are safe and trustworthy

AI introduces unique risks, such as context blindness and hallucinations, that require rigorous safety and governance testing before deployment. By evaluating an agent’s policy adherence, escalation thresholds, and failure modes in realistic cyber simulation infrastructure, security leaders can build operational trust and ensure AI decisions are explainable and secure.

Strengthen cyber resilience with human operators alongside AI agents

An organization’s cyber resilience requires proof that humans and AI systems can operate together effectively when it matters most. By training and testing inside the AI Proving Grounds, organizations can evaluate how well humans and AI work together and prove that both human operators and agents can stand up to real threats.

Customer success story: