Enterprise-Scale Realism

Production-grade environments that mirror live SOC operations

Live-Fire AI Agent Testing

Adversary-driven stress testing under operational pressure

AI Agent Evaluation Metrics

Precision, recall, mean time to detect, false positives

AI Workflow Validation

Tier 1 and Tier 2 automation assurance before deployment

Prove AI Agent Performance Before Production Deployment

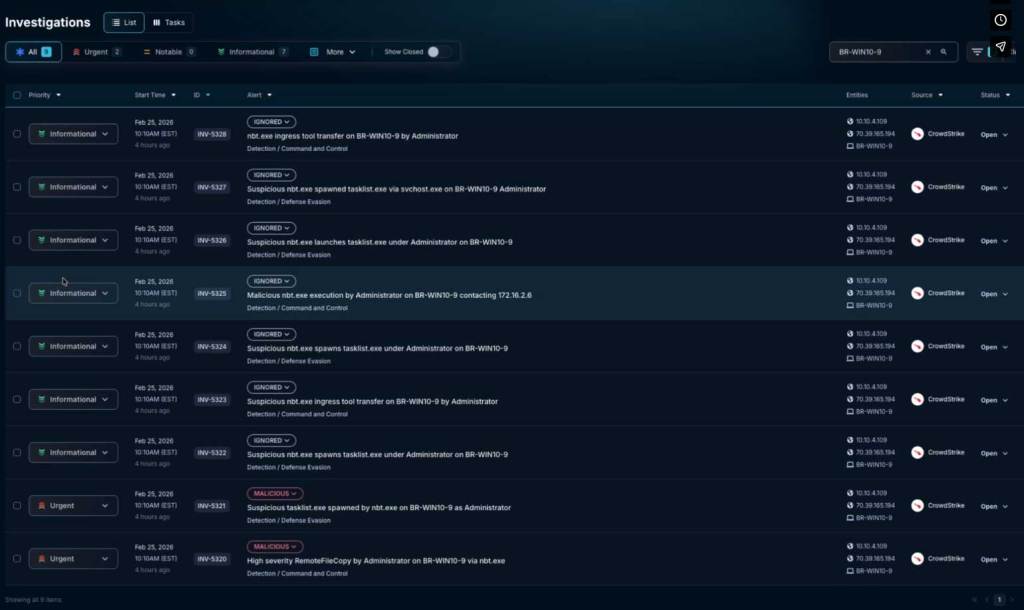

AI agents rarely fail in clean lab environments. They fail in real security operations: amid noisy SOC workflows, under adversary pressure, and across complex escalation paths.

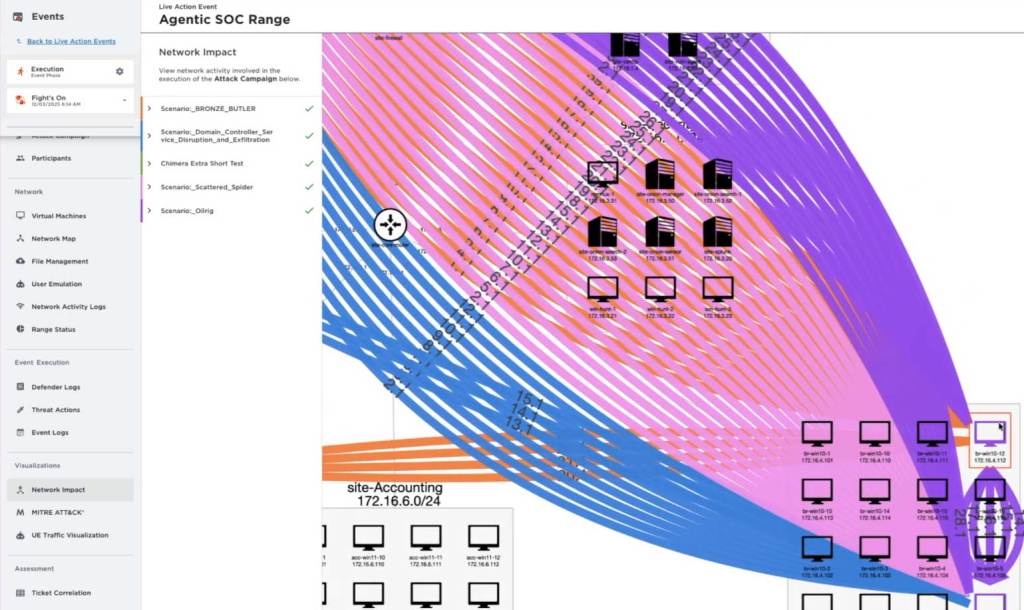

SimSpace provides the enterprise AI Proving Grounds where organizations rigorously test AI agents alongside human operators under realistic operational conditions. Measure performance using meaningful AI agent evaluation metrics, identify failure modes, and validate AI agents before deployment.

Before your AI reaches production, you know exactly how it performs.
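The evaluation metrics named above (precision, recall, mean time to detect, false positives) can be computed from an exercise log along these lines. This is a minimal sketch, not SimSpace's implementation: the `Incident` record, its fields, and the `evaluate` function are hypothetical names chosen for illustration, and it assumes each injected attack is logged with a start time and, if the agent caught it, a detection time.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Incident:
    """One injected attack in an exercise (hypothetical record format)."""
    started_at: float             # seconds since exercise start
    detected_at: Optional[float]  # None if the agent never flagged it


def evaluate(incidents: List[Incident], false_positives: int) -> Dict[str, Optional[float]]:
    """Summarize an agent's detection quality for one exercise run.

    false_positives is the count of alerts raised on benign activity.
    """
    detected = [i for i in incidents if i.detected_at is not None]
    tp = len(detected)            # real attacks the agent flagged
    fn = len(incidents) - tp      # real attacks the agent missed

    # Precision: share of raised alerts that were real attacks.
    precision = tp / (tp + false_positives) if (tp + false_positives) else 0.0
    # Recall: share of real attacks that were flagged.
    recall = tp / (tp + fn) if incidents else 0.0
    # Mean time to detect, averaged over detected attacks only.
    mttd = sum(i.detected_at - i.started_at for i in detected) / tp if tp else None

    return {
        "precision": precision,
        "recall": recall,
        "mttd_seconds": mttd,
        "false_positives": float(false_positives),
    }


if __name__ == "__main__":
    run = [Incident(0, 60), Incident(100, 220), Incident(300, None)]
    print(evaluate(run, false_positives=1))
```

Running the same function over an agent's log and a human analyst's log from the same exercise gives a like-for-like comparison. Note that missed attacks do not enter the mean time to detect, so that figure should always be read together with recall.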

The AI Agent Testing and Validation Lifecycle

Train AI Agents Alongside Human Operators

Test and Evaluate AI Agents Under Live-Fire Conditions

The SimSpace Cyber Range Platform

Train

AI Models

Accelerate innovation with synthetic data and live-fire simulations that make your AI models smarter, faster, and more predictive.

- Generate realistic data with real threat context

- Continuously test, benchmark, and adapt models

- Validate AI resilience before real-world use

Test

AI Agents

Test AI performance against live-fire threats to separate what works from what fails before your agents reach production.

- Deploy AI agents in realistic SOC conditions

- Benchmark and continuously improve AI agent performance

- Validate agent resilience before deployment

Validate Agentic Workflows

- Validate AI agents in realistic SOC environments

- Benchmark AI agents alongside human analysts

- Build continuous improvement into every agentic workflow